Seed3D 2.0 Released: Higher Precision and Greater Usability

Date: 2026-04-23

Category: Models

High-quality, large-scale 3D content is becoming critical infrastructure in fields such as embodied AI and industrial manufacturing. However, 3D content generated by previous methods still falls short of production-grade requirements in terms of geometric precision and material realism.

Last year, Seed3D 1.0 introduced the end-to-end generation of high-quality 3D models from a single image and achieved breakthroughs in texture generation. Today, we are officially releasing Seed3D 2.0, our next-generation 3D generative model with even higher precision. Our team has upgraded the model's architecture, focusing on geometric precision and material quality, while also expanding the downstream usability of the 3D content. Our goal is to further push 3D generation closer to being truly "professional quality".

In comparative evaluations against existing 3D generative models, Seed3D 2.0 achieved state-of-the-art (SOTA) results on both core dimensions: geometry generation and texture/material generation. The model reconstructs complex structures with finer detail, and its generation of PBR (Physically Based Rendering) materials delivers greater realism and stability.

The technical report for Seed3D 2.0 is now published, and the API is live on Volcano Engine. You can visit our project homepage to learn more. We invite you to try it out and share your feedback!

Project homepage:

https://seed.bytedance.com/seed3d_2.0

Access path:

Volcano Ark Experience Center → Log in → Select "Vision Model" → "3D Generation" → Doubao-Seed3D-2.0

1. Geometry Generation: Introducing a Two-stage DiT — From Plausible Structures to Reliable Details

The geometric and texture quality of 3D content are the two core dimensions that determine a model's usability. In particular, geometric quality directly determines whether an object's structure is plausible, making it a key dividing line between "usable" and "unusable" generated content. In Seed3D 1.0, the model had to generate both the "overall structure" and "fine details" simultaneously. While this approach could quickly capture the overall shape of an object, it occasionally suffered from a "softening" effect on sharp edges and fine structures, resulting in edges that were insufficiently straight and curved surfaces that lacked precision.

The complete workflow of geometry generation in Seed3D 2.0

Seed3D 2.0 addresses this by introducing a coarse-to-fine, two-stage generation strategy that decouples "overall structure" from "fine details", allowing them to be optimized separately. This design targets major geometry generation challenges, such as sharp edges, thin-walled structures, and complex topologies:

Stage 1: coarse geometric structure generation. The model uses a DiT with a larger parameter scale to generate coarse-grained geometric structures from the input image, establishing the overall topological relationships and spatial layout.

Stage 2: high-precision detail generation. Using the output of Stage 1 as geometric anchors, the model focuses on recovering details such as sharp edges and refined surfaces. To achieve this, it introduces two key priors:

Local-aware prior: The coarse results from Stage 1 are converted into latent variables to provide a reliable initialization for subsequent detail generation, avoiding the instability caused by "generating from scratch".

Voxelized positional encoding: Points are sampled on the geometric surfaces generated in Stage 1 and voxelized. These serve as positional encodings to provide the model with spatial constraints.
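The voxelized positional encoding idea can be illustrated with a minimal NumPy sketch. It is not the Seed3D 2.0 implementation: the grid resolution, the sinusoidal feature scheme, the function names, and the sphere used as a stand-in for the Stage 1 surface are all assumptions made for illustration.

```python
import numpy as np

def voxelize_points(points, resolution=64):
    """Map surface points in [-1, 1]^3 to unique integer voxel indices."""
    # Scale from [-1, 1] to [0, resolution) and clamp to the grid.
    idx = np.floor((points + 1.0) * 0.5 * resolution).astype(np.int64)
    idx = np.clip(idx, 0, resolution - 1)
    # Keep each occupied voxel only once.
    return np.unique(idx, axis=0)

def sinusoidal_encoding(voxels, resolution=64, num_freqs=4):
    """Encode voxel centers with sin/cos features (an assumed scheme)."""
    coords = (voxels.astype(np.float64) + 0.5) / resolution   # centers in [0, 1)
    freqs = (2.0 ** np.arange(num_freqs)) * np.pi             # (F,)
    angles = coords[:, :, None] * freqs[None, None, :]        # (N, 3, F)
    feats = np.concatenate([np.sin(angles), np.cos(angles)], axis=-1)
    return feats.reshape(len(voxels), -1)                     # (N, 3 * 2F)

# Stand-in for points sampled on the Stage 1 surface: a sphere of radius 0.8.
rng = np.random.default_rng(0)
pts = rng.normal(size=(2048, 3))
pts = 0.8 * pts / np.linalg.norm(pts, axis=1, keepdims=True)

voxels = voxelize_points(pts, resolution=16)
encoding = sinusoidal_encoding(voxels, resolution=16, num_freqs=4)
print(voxels.shape, encoding.shape)
```

Each occupied voxel yields one feature vector, so a detail model conditioned on these vectors only receives positions that lie near the coarse surface.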

Additionally, Seed3D 2.0 includes a corresponding upgrade to its VAE, achieving higher reconstruction fidelity with fewer tokens. By enhancing the detail expression of local regions and dynamically allocating attention based on content, we have significantly boosted both the reconstruction fidelity and inference efficiency of the VAE model.

As shown in the figure below, qualitative comparisons with leading 3D generative models demonstrate that Seed3D 2.0 significantly outperforms baseline methods in rendering fine edges in complex geometries, generating thin-walled structures, and maintaining fidelity to the input image.

Qualitative comparison of geometry generation

Furthermore, we recruited 60 evaluators with 3D modeling experience to conduct blind pairwise comparisons of generation quality between Seed3D 2.0 and 6 baseline models across approximately 200 test cases. In comparative tests of geometry generation, Seed3D 2.0 demonstrated a clear advantage, achieving a higher preference rate (the percentage of cases in which evaluators rated its generation quality as superior) compared to all other 3D generative models. This validates the geometric quality improvements enabled by our architectural innovations.
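As a rough illustration of how a preference rate like the one defined above could be tallied from blind pairwise votes, consider the sketch below. The vote records and baseline names are made up, and the handling of ties (not described here) is simply left out.

```python
from collections import defaultdict

def preference_rates(votes):
    """votes: iterable of (baseline_name, preferred_ours) pairs.

    Returns the fraction of comparisons, per baseline, in which the
    evaluator preferred our model's output.
    """
    wins = defaultdict(int)
    totals = defaultdict(int)
    for baseline, preferred_ours in votes:
        totals[baseline] += 1
        wins[baseline] += int(preferred_ours)
    return {b: wins[b] / totals[b] for b in totals}

# Hypothetical votes, for illustration only (not evaluation data).
votes = [
    ("baseline_a", True), ("baseline_a", True),
    ("baseline_a", False), ("baseline_a", True),
    ("baseline_b", True), ("baseline_b", False),
]
rates = preference_rates(votes)
print(rates)
```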

The percentage of evaluators who preferred Seed3D 2.0 over leading models in geometry generation tasks

2. Texture Generation: A Unified PBR Model — From Visual Similarity to Physical Consistency

Beyond geometric precision, the realism of textures and materials directly determines the quality of 3D content. Particularly in downstream applications, an RGB appearance alone is far from sufficient. A complete set of PBR materials is required to ensure that the 3D content maintains physically consistent visual effects under varying lighting conditions.
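To see why an RGB appearance alone is not enough, the sketch below uses a common convention from metallic-roughness workflows (a fixed 4% specular reflectance for non-metals, and Schlick's Fresnel approximation). These formulas are standard shading conventions rather than anything specific to Seed3D 2.0, and the function names are illustrative.

```python
import numpy as np

def metallic_roughness_to_reflectance(base_color, metallic):
    """Split a base color into diffuse color and specular reflectance F0."""
    base_color = np.asarray(base_color, dtype=np.float64)
    dielectric_f0 = 0.04  # conventional reflectance for non-metals
    diffuse = base_color * (1.0 - metallic)
    f0 = dielectric_f0 * (1.0 - metallic) + base_color * metallic
    return diffuse, f0

def schlick_fresnel(f0, cos_theta):
    """Schlick's approximation of Fresnel reflectance at a given view angle."""
    return f0 + (1.0 - f0) * (1.0 - cos_theta) ** 5

gray = [0.8, 0.8, 0.8]
plastic_diffuse, plastic_f0 = metallic_roughness_to_reflectance(gray, metallic=0.0)
metal_diffuse, metal_f0 = metallic_roughness_to_reflectance(gray, metallic=1.0)
print(plastic_diffuse, plastic_f0)  # diffuse-dominated surface
print(metal_diffuse, metal_f0)      # specular-dominated surface
```

The same gray base color yields a mostly diffuse surface when `metallic` is 0 and a purely specular one when it is 1, so the two respond very differently once the lighting changes.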

Seed3D 1.0 employed a cascaded model for RGB generation and PBR decomposition, where errors from intermediate steps would accumulate and compromise the final material quality. In Seed3D 2.0, we simplified this into a unified PBR generative model. By retaining the MMDiT dual-stream architecture and using modality-specific projection layers, the model can jointly process the full set of PBR texture maps within shared DiT layers.
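The idea of modality-specific projections feeding shared layers can be sketched in a few lines of NumPy. This is a toy under stated assumptions, not the MMDiT architecture: the set of maps (albedo, metallic, roughness, normal), the channel counts, the sizes, and the single attention-style block standing in for the shared DiT layers are all hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
# Assumed PBR maps and channel counts (illustrative only).
MODALITIES = {"albedo": 3, "metallic": 1, "roughness": 1, "normal": 3}
HIDDEN, NUM_TOKENS = 32, 16

# One input and one output projection per modality; the block weights are shared.
in_proj = {m: rng.normal(scale=0.1, size=(c, HIDDEN)) for m, c in MODALITIES.items()}
out_proj = {m: rng.normal(scale=0.1, size=(HIDDEN, c)) for m, c in MODALITIES.items()}
shared_w = rng.normal(scale=0.1, size=(HIDDEN, HIDDEN))

def shared_block(tokens):
    """Stand-in for shared layers: residual self-attention over all tokens."""
    scores = tokens @ tokens.T / np.sqrt(tokens.shape[1])
    scores = scores - scores.max(axis=1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)
    return tokens + weights @ (tokens @ shared_w)

def forward(maps):
    # Project every modality into the same token space and concatenate.
    tokens = np.concatenate([maps[m] @ in_proj[m] for m in MODALITIES], axis=0)
    tokens = shared_block(tokens)  # tokens from all maps attend to each other
    # Split back per modality and project to each map's own channel count.
    chunks = np.split(tokens, len(MODALITIES), axis=0)
    return {m: chunk @ out_proj[m] for m, chunk in zip(MODALITIES, chunks)}

maps = {m: rng.uniform(size=(NUM_TOKENS, c)) for m, c in MODALITIES.items()}
outputs = forward(maps)
print({m: v.shape for m, v in outputs.items()})
```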

The texture generation workflow in Seed3D 2.0

Building on a unified generation architecture, we have also introduced two key innovations to achieve precise material generation at higher resolutions:

MoE architecture refines the details of high-resolution texture and sharpens boundaries: Limited by its output resolution, Seed3D 1.0 struggled to preserve details during material decomposition. Furthermore, directly scaling up the model's parameters and resolution would incur prohibitively high computational overhead. Therefore, we adopted a Mixture of Experts (MoE) architecture, which expands model parameters and resolution while controlling inference computation through sparse expert routing. This enables the model to generate richer texture details and more accurate metal-roughness boundaries.
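Sparse expert routing can be illustrated with a generic top-k MoE layer. The sketch below is a textbook-style formulation in NumPy, not the routing used in Seed3D 2.0; the number of experts, the value of `top_k`, and the tanh experts are assumptions.

```python
import numpy as np

def moe_layer(tokens, gate_w, expert_ws, top_k=2):
    """Route each token to its top_k experts and mix their outputs."""
    logits = tokens @ gate_w                               # (N, E) router scores
    top_idx = np.argsort(-logits, axis=1)[:, :top_k]       # (N, k) chosen experts
    top_logits = np.take_along_axis(logits, top_idx, axis=1)
    # Softmax over the selected experts only.
    top_logits = top_logits - top_logits.max(axis=1, keepdims=True)
    gates = np.exp(top_logits)
    gates /= gates.sum(axis=1, keepdims=True)

    out = np.zeros_like(tokens)
    for e, w in enumerate(expert_ws):
        for slot in range(top_k):
            mask = top_idx[:, slot] == e
            if mask.any():
                # Only tokens routed to expert e pay for its computation.
                out[mask] += gates[mask, slot][:, None] * np.tanh(tokens[mask] @ w)
    return out, top_idx

rng = np.random.default_rng(0)
tokens = rng.normal(size=(8, 16))
gate_w = rng.normal(size=(16, 4))
expert_ws = [rng.normal(scale=0.1, size=(16, 16)) for _ in range(4)]
out, top_idx = moe_layer(tokens, gate_w, expert_ws, top_k=2)
print(out.shape, top_idx.shape)
```

With four experts and `top_k=2`, each token touches half of the expert parameters, which is how total parameter count can grow without a proportional increase in per-token compute.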

Leveraging VLM priors to bolster the stability and accuracy of material decomposition under unseen lighting: Inferring PBR properties from RGB images is a major hurdle for the industry, because identical appearances can arise from different material combinations. As a result, issues such as color shifts or non-metallic regions erroneously classified as metallic can occur during inference. To address this, we introduced a VLM to generate descriptions of the material types and physical properties shown in the input image. These descriptions are then injected into the DiT as additional control signals, making the material decomposition much more stable and reasonable.

In qualitative assessments of texture generation, Seed3D 2.0 consistently outperforms baseline methods in photorealism, material quality, visual detail, and text synthesis.

For instance, on an object like a stainless steel pot, Seed3D 2.0 delivers a significantly more authentic metallic finish. It captures subtle roughness variations and faint scuff marks that mimic real-world usage, with naturally distributed highlights and consistent material logic across all parts. In contrast, other methods tend to produce overly uniform or underexposed surfaces, missing the micro-variations that define physical reality.

Qualitative Comparison of Texture Generation Dimensions

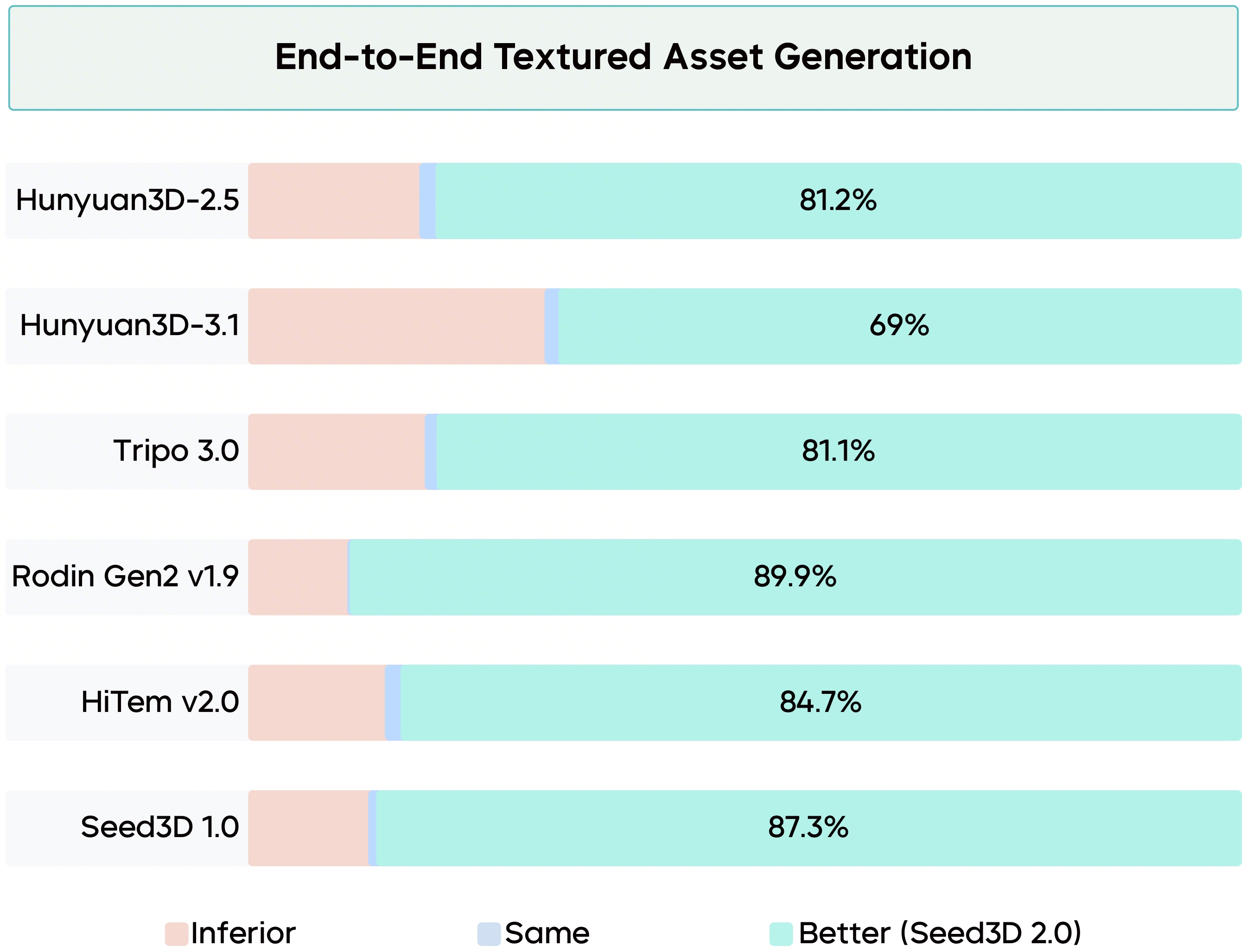

Regarding textured 3D asset generation, Seed3D 2.0 is the preferred choice in human evaluations, with win rates starting at 69.0% against mainstream baseline models.

Human Preference Rates: Seed3D 2.0 vs. Baseline Models in Texture Generation.

3. Downstream Applications: Part-Level Generation and Scene Composition

Many downstream scenarios require 3D assets to be decomposed into functional components. For instance, game engines demand independently manipulable object modules in interactive systems, while simulation environments rely on articulated part structures for kinematic movement. To meet these needs, Seed3D 2.0 enhances modeling flexibility, enabling seamless assembly and decomposition of individual parts.

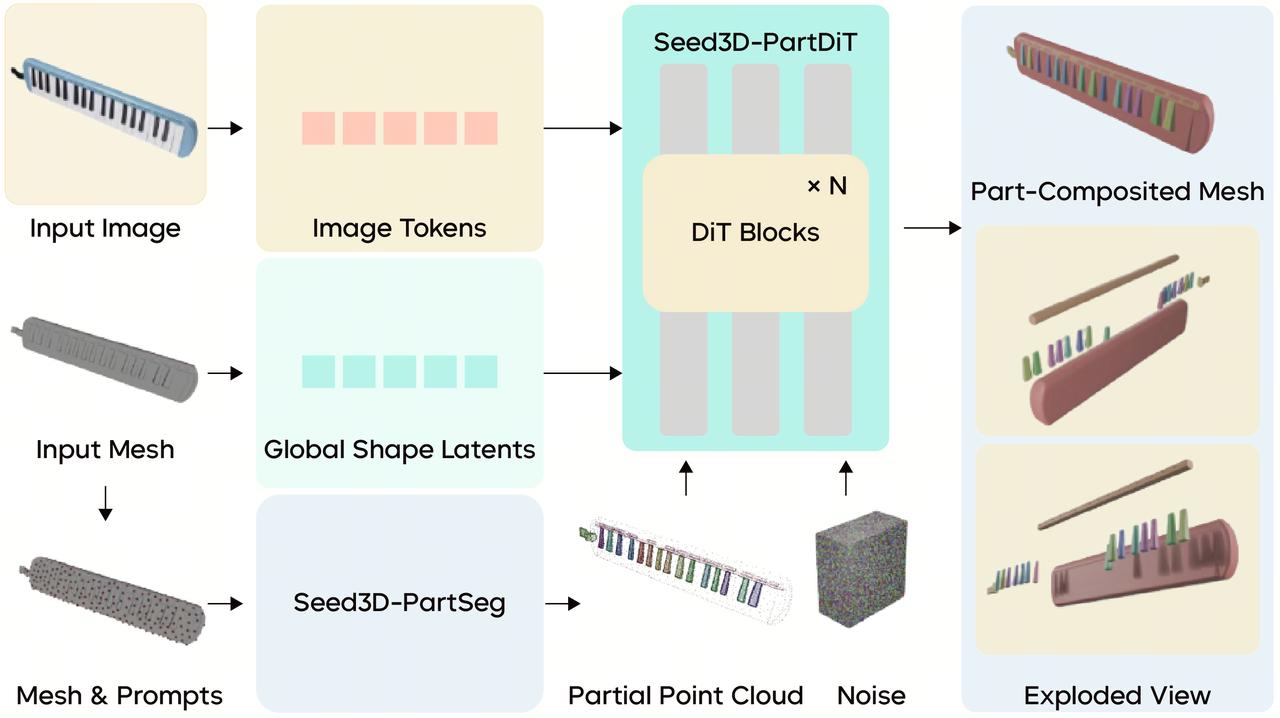

Overview of the part-level 3D asset generation

Seed3D 2.0 adopts an "understand-then-generate" paradigm, breaking down the complex part generation process into two steps: first performing part-level decomposition on the generated 3D content, and then completing the full shape of each individual part.

First, by collecting and annotating extensive dedicated segmentation data, Seed3D 2.0 learns how to partition 3D data by function and other criteria. We trained Seed3D-PartSeg, a 3D understanding module, to perform surface segmentation on full 3D meshes. Building on this, Seed3D-PartDiT, a generative network, takes the global 3D shape, segmented point clouds, and images as inputs, completes the surface segmentation results, and assembles them into full part-level meshes.
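The hand-off between the two steps can be pictured as splitting a labeled mesh into per-part surface patches. The sketch below covers only that bookkeeping, assuming the segmentation arrives as one integer label per face; the function name and data layout are hypothetical, and the shape-completion step performed by the generative network is not reproduced.

```python
import numpy as np

def split_mesh_by_face_labels(vertices, faces, face_labels):
    """Return {label: (part_vertices, part_faces)} with re-indexed faces."""
    parts = {}
    for label in np.unique(face_labels):
        part_faces = faces[face_labels == label]
        # Keep only the vertices this part uses and remap face indices to them.
        used, inverse = np.unique(part_faces, return_inverse=True)
        parts[int(label)] = (vertices[used], inverse.reshape(part_faces.shape))
    return parts

# Toy mesh: two disconnected triangles, labeled as part 0 and part 1.
vertices = np.array([
    [0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0],
    [2.0, 0.0, 0.0], [3.0, 0.0, 0.0], [2.0, 1.0, 0.0],
])
faces = np.array([[0, 1, 2], [3, 4, 5]])
face_labels = np.array([0, 1])

parts = split_mesh_by_face_labels(vertices, faces, face_labels)
for label, (v, f) in parts.items():
    print(label, v.shape, f.tolist())
```

Each patch produced this way is an open surface; in the pipeline described above, the generative step is what turns such patches into complete, closed part meshes.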

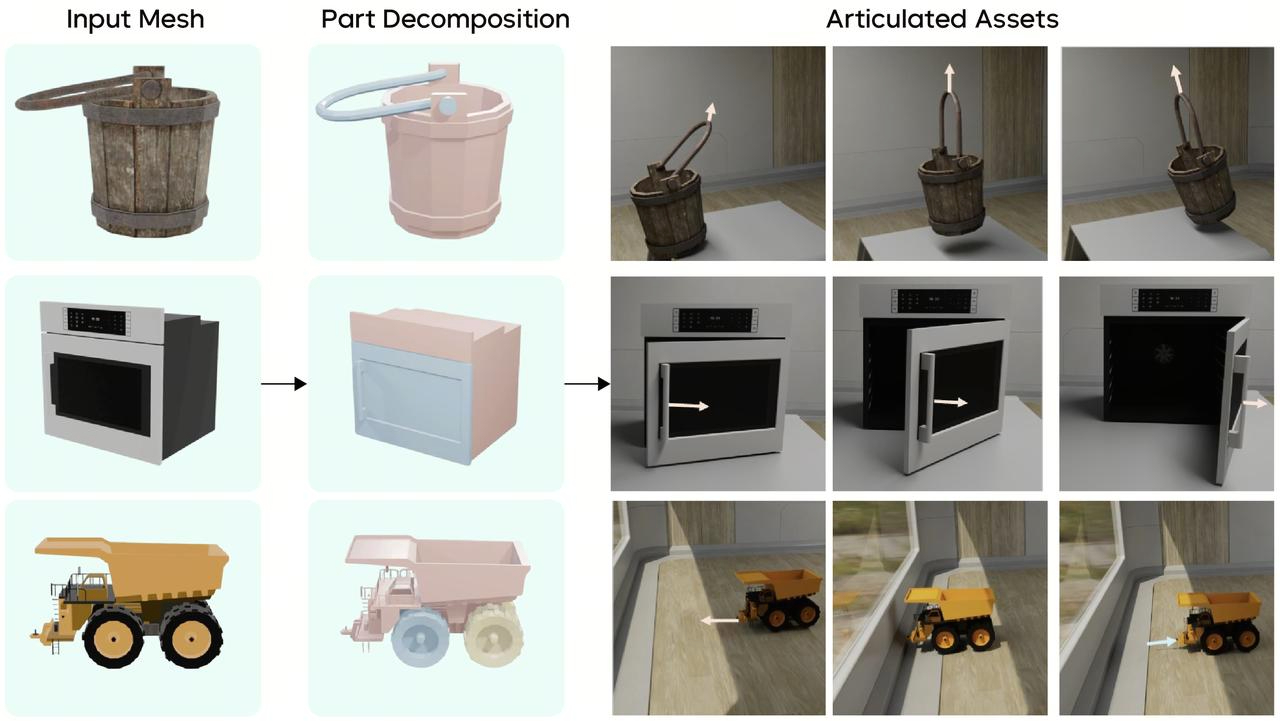

As shown in the figure, a chair is automatically decomposed into a seat, backrest, and base, while a robot is disassembled into body parts such as limbs to facilitate detailed structural analysis and performance evaluation. This part-level representation establishes a critical foundation for subsequent high-precision interactions.

Independent parts require correct physical connections to produce meaningful interactions. Therefore, building upon part segmentation, Seed3D 2.0 further incorporates articulated modeling capabilities.

This process integrates multimodal understanding and generation technologies. The model first leverages VLMs to decompose parts into kinematic components and identify joint types (e.g., revolute parts vs. fixed structures), and then estimates joint axes via geometric priors. To ensure the physical plausibility of the motion, the model also introduces an image-to-video model to generate motion references, optimizing the range of motion for articulated parts. Ultimately, the model outputs 3D content with complete joint information in standard formats like URDF, achieving compatibility with mainstream physics simulation engines such as Isaac Sim.
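For readers unfamiliar with URDF, the sketch below writes a minimal description of two links connected by one revolute joint using Python's standard XML library. The link names, joint axis, and limits are invented for illustration and do not come from Seed3D 2.0 output; a real asset would also carry visual, collision, and inertial elements.

```python
import xml.etree.ElementTree as ET

def make_urdf(name, parent, child, axis, lower, upper):
    """Build a minimal URDF string with one revolute joint."""
    robot = ET.Element("robot", name=name)
    ET.SubElement(robot, "link", name=parent)
    ET.SubElement(robot, "link", name=child)
    joint = ET.SubElement(robot, "joint", name=f"{parent}_to_{child}", type="revolute")
    ET.SubElement(joint, "parent", link=parent)
    ET.SubElement(joint, "child", link=child)
    ET.SubElement(joint, "origin", xyz="0 0 0", rpy="0 0 0")
    # Joint axis, expressed in the joint frame.
    ET.SubElement(joint, "axis", xyz=" ".join(str(v) for v in axis))
    # Revolute joints need motion limits (radians) plus effort and velocity bounds.
    ET.SubElement(joint, "limit", lower=str(lower), upper=str(upper),
                  effort="10", velocity="1")
    return ET.tostring(robot, encoding="unicode")

# Hypothetical example: a cabinet door that swings about a vertical axis.
urdf_text = make_urdf("cabinet", "body", "door", axis=(0, 0, 1), lower=0.0, upper=1.57)
print(urdf_text)
```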

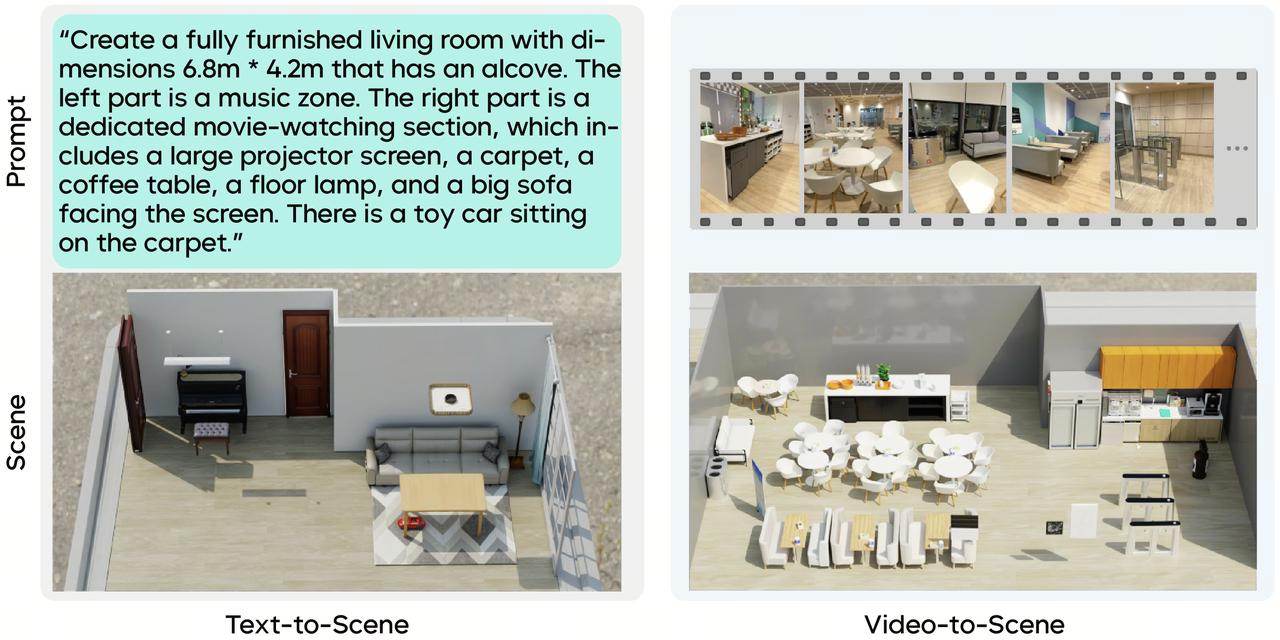

The real physical world is composed of numerous interacting objects. Therefore, Seed3D 2.0 also extends its single-object generation capabilities to scene generation.

To achieve plausible object arrangements, Seed3D 2.0 intelligently adapts its layout strategy based on different input conditions: for text inputs, it utilizes a fine-tuned LLM for spatial reasoning and layout generation; for multi-view image or video inputs, the model additionally leverages visual signals like depth estimation, along with capabilities such as instance segmentation and occlusion inpainting, to infer the scene's spatial layout. Once the layout is obtained, Seed3D 2.0 can generate 3D content individually and assemble them according to their spatial relationships to construct a rich and complete scene.
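The final assembly step, placing individually generated objects according to a layout, amounts to applying one rigid transform plus scale per object. The sketch below assumes a layout given as position, yaw about a vertical z-axis, and uniform scale; this layout format and the names used are hypothetical.

```python
import numpy as np

def placement_matrix(position, yaw, scale):
    """4x4 transform: uniform scale, rotation about z, then translation."""
    c, s = np.cos(yaw), np.sin(yaw)
    m = np.eye(4)
    m[:3, :3] = scale * np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
    m[:3, 3] = position
    return m

def place(vertices, matrix):
    """Apply a 4x4 transform to an (N, 3) array of vertices."""
    homo = np.hstack([vertices, np.ones((len(vertices), 1))])
    return (homo @ matrix.T)[:, :3]

# Hypothetical layout entries; each object is a stand-in set of vertices.
layout = [
    {"name": "table", "position": (0.0, 0.0, 0.0), "yaw": 0.0, "scale": 1.0},
    {"name": "chair", "position": (1.0, 0.0, 0.0), "yaw": np.pi / 2, "scale": 0.5},
]
object_vertices = np.array([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]])

scene = {
    item["name"]: place(
        object_vertices,
        placement_matrix(item["position"], item["yaw"], item["scale"]),
    )
    for item in layout
}
print(scene["chair"])
```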

Seed3D 2.0: Simulation scene generation pipeline

Taking it a step further, by integrating the aforementioned part-level generation and articulated modeling capabilities, the final scenes generated by Seed3D 2.0 not only possess precise spatial structures, but the objects within them can also be seamlessly transformed into articulated 3D content that supports physical interactions. This lays the groundwork for downstream physics simulation scenarios.

4. Conclusion and Outlook

Seed3D 2.0 marks significant advancements in geometric precision, PBR material quality, and downstream utility. However, 3D generation continues to face a series of long-term challenges: there remains room for improvement in the detail precision and generalization of geometry generation; texture generation is still prone to occlusion and mapping errors; and the large-scale deployment of 3D models is often constrained by inference efficiency. Furthermore, the full spectrum of real-world use cases remains a frontier to be explored. We are committed to tackling these challenges, driving the scalable application of 3D generation technology across more diverse and complex scenarios.