Seed2.0

Seed2.0 has been systematically optimized to meet the requirements of large-scale production deployment, and is designed to help tackle complex real-world tasks.

Overview

The Seed2.0 series model has been officially released, offering three general-purpose agent models of varying sizes—Pro, Lite, and Mini.

The general-purpose models in this series deliver a comprehensive upgrade in multimodal understanding, with strengthened LLM and Agent capabilities that enable steady progression in real-world long-horizon tasks.

Seed2.0 further expands its capability frontier from competition-level reasoning to research-grade tasks, achieving first-tier industry performance in evaluations on high economic-value and high scientific-value workloads.

*Seed2.0 Lite was upgraded at the end of April. As the first omni-modal understanding model in the Seed foundation model series, it natively supports unified understanding across video, image, audio, and text, while also featuring upgraded Agent, Coding, and GUI capabilities.

Seed2.0 Pro

Focuses on long-chain reasoning and robustness in complex workflows. Optimized for complex scenarios in real-world tasks.

Seed2.0 Lite

Balances output quality and response speed.

Ideal as a general-purpose, production-grade model.

Seed2.0 Mini

Optimized for inference throughput and deployment density. Designed for high concurrency and batch generation scenarios.

Model Performance

Seed2.0 delivers significant enhancements in visual reasoning and perception, and achieves SOTA performance on benchmarks, such as BabyVision.

In dynamic video scenarios, Seed2.0 strengthens its foundational capabilities in temporal understanding and motion perception, and achieves SOTA results on multiple video reasoning benchmarks.

Seed2.0 further enhances its instruction-following capabilities and achieves top-tier industry performance when evaluated on complex Agent capabilities.

*The latest version of Seed2.0 Lite enables cross-modal reasoning that combines audio and visual information, achieving industry-leading performance on both video and audio understanding benchmarks.

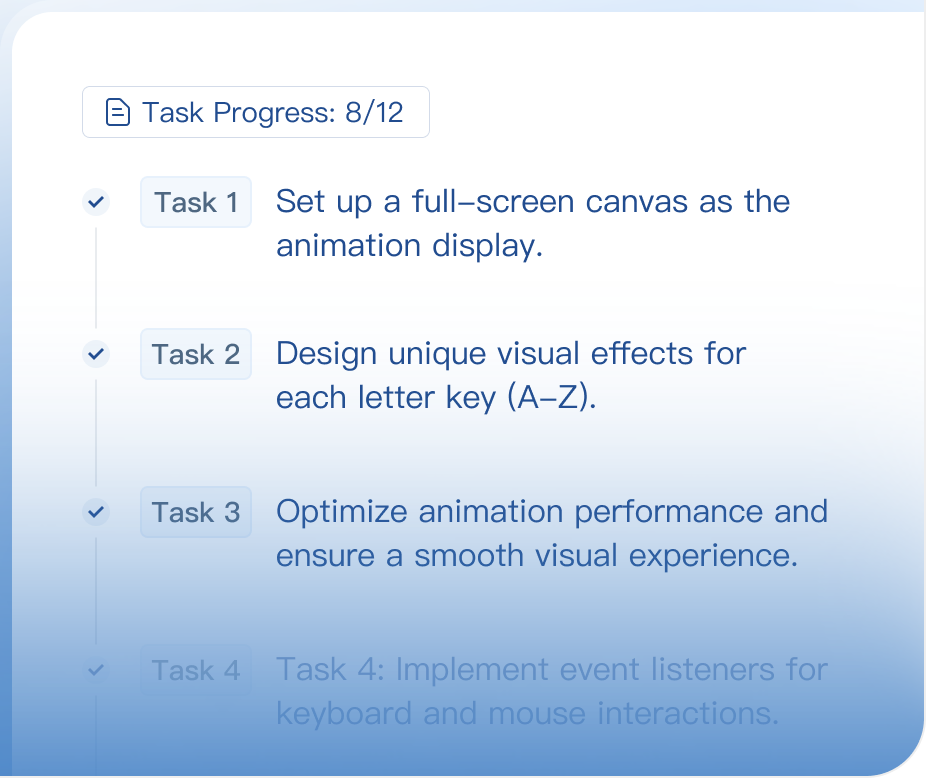

Beyond standard benchmarks, we place greater emphasis on actual user experience. Before this version was released, we invited over 50 Ark users to evaluate the Coding Agent capabilities of the latest Seed2.0 Lite model. The results show that the new version also delivers a clear improvement in this area.

Omni-Modal Understanding and Interactive Applications

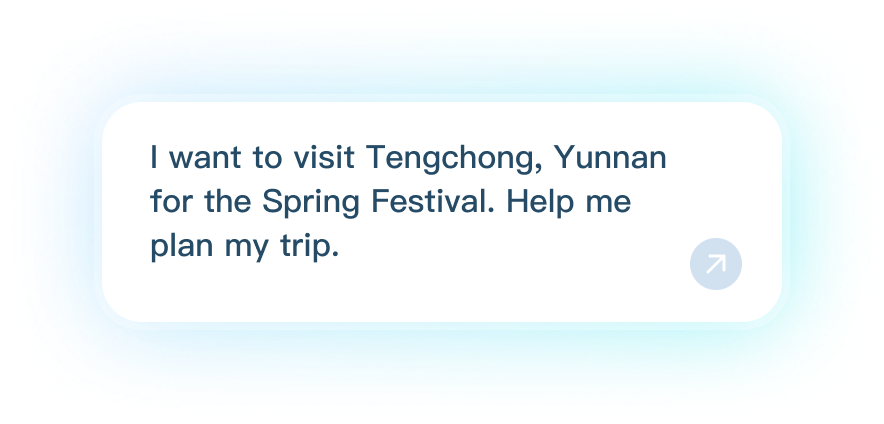

Seed2.0 can process complex visual inputs and enable real-time interaction and app generation. Whether extracting structured information from images or generating interactive content via visual inputs, Seed2.0 handles tasks fast and reliably. Additionally, with its late-April update, Seed2.0 Lite now supports audio input, enabling omni-modal understanding.

It unifies the processing of diverse audio inputs, such as speech and ambient sounds, and fuses them with visual signals from videos to deliver a holistic analysis of complex events.

Steady progression on sophisticated professional tasks

Seed2.0 significantly enhances the performance of its LLM and Agent, maintaining high stability and reliability when executing long-horizon, multi-step instructions.

Workflow Gym – FreeCAD Double Boss Modeling: Volume and Surface Area Extraction Task

Based on the FreeCAD Part Design Workbench, it completes end-to-end modeling of double boss and extraction of geometric parameters.

Workflow Gym – FreeCAD Double Boss Modeling: Volume and Surface Area Extraction Task

Evaluation Results

We conducted a comprehensive evaluation of the Seed2.0 series, which demonstrated strong performance across key tasks such as reasoning, complex instruction following, and multimodal understanding. The latest Seed2.0 Lite model shows significant performance improvements and achieves SOTA results on multiple video and audio understanding benchmarks.

*Swipe right to view all model evaluation results.

*Swipe right to view all model evaluation results.

Capability

Benchmark

Seed2.0 Lite(0428)

Seed2.0 Lite(0215)

Seed2.0 Pro(0215)

GPT-5.4 Mini

Gemini 3 Flash

-

-

Capability

Benchmark

Seed2.0 Lite(0428)

Seed2.0 Lite(0215)

Seed2.0 Pro(0215)

GPT-5.4 High

Gemini 3 Flash

Gemini 3.1 Pro High

-

Capability

Benchmark

Seed2.0 Lite(0428)

Seed1.8

Claude Opus 4.7

Claude Sonnet 4.6

Claude Sonnet 4.5

GPT-5.4 High

Gemini 3.1 Pro

Capability

Benchmark

Seed2.0 Lite(0428)

Seed2.0 Lite(0215)

Seed2.0 Pro(0215)

Seed2.0 Mini(0215)

Gemini 3 Pro High

Gemini 3 Flash High

-

Capability

Benchmark

Seed2.0 Lite(0428)

Gemini-3.1-Pro

-

-

-

-

-

Capability

Benchmark

Seed2.0 Lite(0428)

Seed2.0 Lite(0215)

Seed2.0 Pro(0215)

GPT-5.4 Mini

Gemini 3 Flash

-

-

Knowledge

GPQA Diamond

88.4%

85.1%

88.9%

88.0%

90.7%

-

-

SuperGPQA

69.6%

67.5%

68.7%

63.9%

72.7%

-

-

HLE (no tool, text only)

25.7%

28.2%

32.4%

28.2%

31.7%

-

-

Reasoning

BeyondAIME

79.0%

76.0%

86.5%

80.0%

82.0%

-

-

FrontierSci-olympiad

72.0%

70.0%

74.0%

70.0%

73.0%

-

-

Superchem (text-only)

55.0%

48.0%

51.6%

29.1%

54.4%

-

-

BABE

57.9%

50.2%

53.5%

49.0%

55.2%

-

-

Instruction Following

CL-Bench

20.1%

20.0%

20.8%

14.9%

16.1%

-

-

MultiChallenge

69.9%

63.2%

68.3%

62.5%

69.3%

-

-

SearchAgent

WideSearch

70.3%

74.5%

74.7%

73.0%

64.0%

-

-

BrowseComp

64.0%

72.1%

77.3%

61.3%

41.5%

-

-

ResearchRubrics

59.2%

50.8%

50.7%

47.1%

36.9%

-

-

XPert Bench

56.8%

63.3%

64.5%

41.8%

50.1%

-

-

Real World

SkillsBench

43.7%

42.1%

42.3%

45.4%

26.4%

-

-

GDPval

53.1%

47.3%

54.4%

50.6%

13.7%

-

-

FinSearchComp

63.8%

65.1%

70.2%

61.8%

43.7%

-

-

Tob-Agent

51.4%

45.2%

52.6%

43.0%

37.4%

-

-

CodingAgent

SWE Multilingual

66.6%

64.4%

71.7%

73.6%

71.1%

-

-

SWE-Bench Pro

46.6%

46.0%

46.9%

54.4%

46.7%

-

-

NL2Repo-Bench

28.7%

24.6%

27.9%

37.3%

27.6%

-

-

PaperBench

52.5%

54.6%

53.8%

49.1%

33.9%

-

-

Terminal Bench 2.0

43.3%

45.0%

55.8%

60.0%

60.0%

-

-

Vibe Coding 人工评估

49.4%

48.7%

48.4%

57.4%

56.9%

-

-

Capability

Benchmark

Seed2.0 Lite(0428)

Seed2.0 Lite(0215)

Seed2.0 Pro(0215)

GPT-5.4 High

Gemini 3 Flash

Gemini 3.1 Pro High

-

STEM

MathVision

89.8

86.4

88.8

90.6

87.5

89.0

-

MMMU_Pro

78.4

76.0

78.2

79.2

80.4

82.5

-

HiPhO

83.8

72.5

74.1

84.3

78.0

86.6

-

MedXpertQA-MM

79.6

64.0

68.1

76.9

78.0

80.2

-

Perception

BabyVision

64.7

57.5

60.6

53.4

47.2

54.4

-

VLMBias

80.6

74.8

77.4

42.8

66.1

73.5

-

Visual Knowledge

SimpleVQA

72.7

67.2

71.4

56.0

68.4

70.5

-

WorldVQA

50.2

44.0

49.9

30.2

46.5

44.4

-

InfoGraphics

CharXiv-DQ

94.5

93.3

93.5

94.1

94.0

94.9

-

CharXiv-RQ

82.4

79.9

80.5

82.6

79.7

84.0

-

Embodied

ERQA

71.5

65.8

68.5

64.5

65.8

70.8

-

Capability

Benchmark

Seed2.0 Lite(0428)

Seed1.8

Claude Opus 4.7

Claude Sonnet 4.6

Claude Sonnet 4.5

GPT-5.4 High

Gemini 3.1 Pro

GUI

OSWorld-Verfied

64.4%

61.9%

78.0%

72.5%

62.9%

75.0%

64.0%

MobileWorld

64.6%

52.1%

56.4%

-

47.8%

-

57.3%

Capability

Benchmark

Seed2.0 Lite(0428)

Seed2.0 Lite(0215)

Seed2.0 Pro(0215)

Seed2.0 Mini(0215)

Gemini 3 Pro High

Gemini 3 Flash High

-

Video Knowledge

VideoMMMU

88.3

84.1

86.9

80.6

87.6*

88.1

-

MMVU

76.7

75.0

78.2

69.0

76.3

77.9

-

VideoSimpleQA-v2

69.0

65.0

71.5

64.9

-

-

-

VideoSimpleQA

71.7

66.6

71.9

67.7

72.4

70.0

-

SciVideo

70.3

51.4

52.3

35.3

-

74.1

-

Video Reasoning

VideoReasonBench

59.4

64.2

77.8

40.5

59.5

61.2

-

VideoHolmes

67.4

63.8

67.4

58.6

64.2

65.6

-

Minerva

68.5

63.8

66.5

54.7

65.0

64.4

-

Motion & Perception

TVBench

80.4

71.5

75.0

70.5

71.1

69.6

-

TOMATO

72.5

57.3

59.9

47.4

55.8

60.8

-

EgoTempo

68.4

61.8

71.8

67.2

65.4

58.4

-

MotionBench

72.4

70.9

75.2

65.1

70.3

68.9

-

ContPhy

62.4

56.1

67.4

55.9

61.1

62.0

-

Morese-500

34.6

32.2

37.4

32.2

33.0

32.4

-

Long Video

VideoMME

89.0

87.7

89.5

81.2

88.4*

85.2

-

VideoMMEv2

64.9

-

60.5*

-

66.1*

61.1*

-

CGBench

65.5

59.3

65.0

-

65.5

65.3

-

LongVideoBench

79.0

77.3

80.3

74.8

78.2

74.5

-

LVBench

76.4

73.0

76.4

66.6

-

-

-

VideoEval-Pro

49.5

44.3

47.3

43.7

-

51.9

-

Streaming Video

OVBench

63.2

65.5

69.2

60.1

63.5

59.2

-

ODVBench

66.0

69.6

72.5

65.1

63.6

56.7

-

LiveSports-3K

78.1

77.8

78.0

73.3

74.5

73.2

-

OVOBench

75.4

76.7

77.0

70.4

70.1

68.7

-

ViSpeak

87.0

84.0

78.5

77.5

89.0

88.0

-

Multi-video

CrossVid

63.7

57.7

61.0

58.6

53.0

48.7

-

Visual-Audio Understanding

OmniVideoBench

61.7

44.5

49.5

40.8

61.4(3.1Pro)

-

-

AVMeme

69.5

60.6

61.2

50.7

77.3(3.1Pro)

-

-

JointAVBench

69.5

56.7

62.3

52.7

-

-

-

WorldSense

67.3

57.0

57.0

52.7

65.5(3.1Pro)

-

-

Capability

Benchmark

Seed2.0 Lite(0428)

Gemini-3.1-Pro

-

-

-

-

-

Audio Understanding

MMSU

86.54

85.94

-

-

-

-

-

WildSpeech

75.81

75.41

-

-

-

-

-

ASR

WenetSpeech test-net

4.47

9.52

-

-

-

-

-

WenetSpeech test-meeting

5.31

12.80

-

-

-

-

-

Librispeech test-clean

1.07

1.94

-

-

-

-

-

Librispeech test-other

2.17

3.60

-

-

-

-

-

S2TT

Fleurs(15 langs)(zh/en<->xx)

74.70

73.14

-

-

-

-

-

ASR performance is evaluated using WER/CER, where lower values indicate better performance.